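import os

# Set environment variables before importing transformers so they are in place
# when the underlying libraries load.
# Note: HF_MODELS_HOME is kept from the original setup. The cache variable
# documented by Hugging Face is HF_HOME; loading below does not depend on either,
# because the model is read from an explicit local path.
os.environ["HF_MODELS_HOME"] = "E:\\data\\ai_model\\"
# Let the process continue if more than one copy of the OpenMP runtime gets loaded.
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

from transformers import AutoTokenizer, AutoModelForQuestionAnswering, pipeline

# Directory that holds the locally downloaded models
local_model_path = "E:\\data\\ai_model\\"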

def pipeline_question1():
    # Full path to the pretrained model directory on disk
    model_name = os.path.join(local_model_path, "distilbert-base-uncased-distilled-squad")

    # Load the model and tokenizer from disk; local_files_only=True
    # ensures nothing is fetched from the Hugging Face Hub
    tokenizer = AutoTokenizer.from_pretrained(model_name, local_files_only=True)
    model = AutoModelForQuestionAnswering.from_pretrained(model_name, local_files_only=True)

    # Create the question-answering pipeline
    qa_pipeline = pipeline("question-answering", model=model, tokenizer=tokenizer)

    # Define the context and the question
    context = "The Apollo program was the third United States human spaceflight program carried out by the National Aeronautics and Space Administration (NASA), which succeeded in landing the first humans on the Moon from 1969 to 1972."
    question = "What was the Apollo program?"

    # Run the pipeline; the answer is extracted as a span of the context
    result = qa_pipeline(question=question, context=context)

    # Print the result
    print(f"Question: {question}")
    print(f"Answer: {result['answer']}")


if __name__ == '__main__':
    pipeline_question1()
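Because the script loads everything with `local_files_only=True`, an incomplete model folder only shows up as an error at load time. The following is a minimal sketch of a pre-check that could run before `from_pretrained`. The helper name `check_model_dir` and the two file lists are assumptions made for illustration: `config.json` plus one weights file is a common layout for a saved checkpoint, but the exact set of files depends on how the model was exported.

```python
from pathlib import Path

# Assumed file names (not taken from the original script); adjust to the
# actual contents of the downloaded model folder.
REQUIRED_FILES = ("config.json",)
WEIGHT_FILES = ("model.safetensors", "pytorch_model.bin")


def check_model_dir(model_dir):
    """Return a list of problems found; an empty list means the check passed."""
    root = Path(model_dir)
    if not root.is_dir():
        return [f"directory not found: {root}"]

    # Every file in REQUIRED_FILES must be present.
    problems = [
        f"missing file: {name}"
        for name in REQUIRED_FILES
        if not (root / name).is_file()
    ]

    # At least one of the candidate weights files must be present.
    if not any((root / name).is_file() for name in WEIGHT_FILES):
        problems.append(
            "no weights file found (looked for " + ", ".join(WEIGHT_FILES) + ")"
        )
    return problems
```

Calling `check_model_dir(model_name)` at the top of `pipeline_question1()` and printing any returned messages would give a clearer hint than the load error alone.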

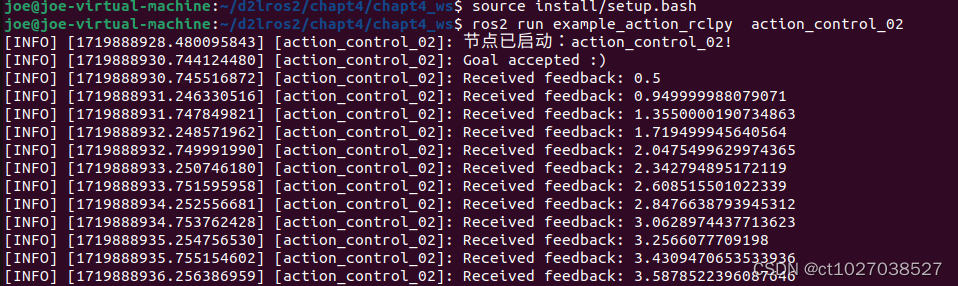

Execution result

Question: What was the Apollo program?

Answer: the third United States human spaceflight program
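The model is extractive: the answer is copied out of the context rather than generated. Besides `answer`, the dictionary returned by the question-answering pipeline also has `score`, `start` and `end` keys, where `start` and `end` are character offsets into the context. The sketch below illustrates what those offsets mean without loading the model. It recomputes them with plain string search in a hypothetical helper, `answer_span`, which is not part of transformers; the pipeline's own offsets were not checked here.

```python
def answer_span(context, answer):
    """Return (start, end) character offsets of the first match, or None."""
    start = context.find(answer)
    if start == -1:
        return None
    return start, start + len(answer)


context = (
    "The Apollo program was the third United States human spaceflight program "
    "carried out by the National Aeronautics and Space Administration (NASA), "
    "which succeeded in landing the first humans on the Moon from 1969 to 1972."
)
answer = "the third United States human spaceflight program"

span = answer_span(context, answer)
start, end = span

# Slicing the context with the offsets gives back the answer text.
print(context[start:end])
```

In the original script, the same slice could be taken with `context[result['start']:result['end']]`, and `result['score']` gives the model's confidence in that span.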